Since COVID began to dominate the news, I’ve seen a lot of reporting based on modeling. I’d never have expected this topic to become so mainstream or so important to public policy debate, but I’m glad it is.

However, I’m seeing some horrible, deceptive reporting and political claims about various modeling results. I thought it could be helpful to lay out some basics and then talk about how politicians and the press on both sides are misreading things.

TL;DR:

- Models are just really good, formalized theories

- As theories, they are only as good as the amount of testing and refinement they’ve undergone

- When someone writes that “a model shows”, translate it to “a theory says”. Model output isn’t data.

- All sides – left and right, deniers and maskers – are guilty of thinking model output “proves” something. It can’t.

What’s a model?

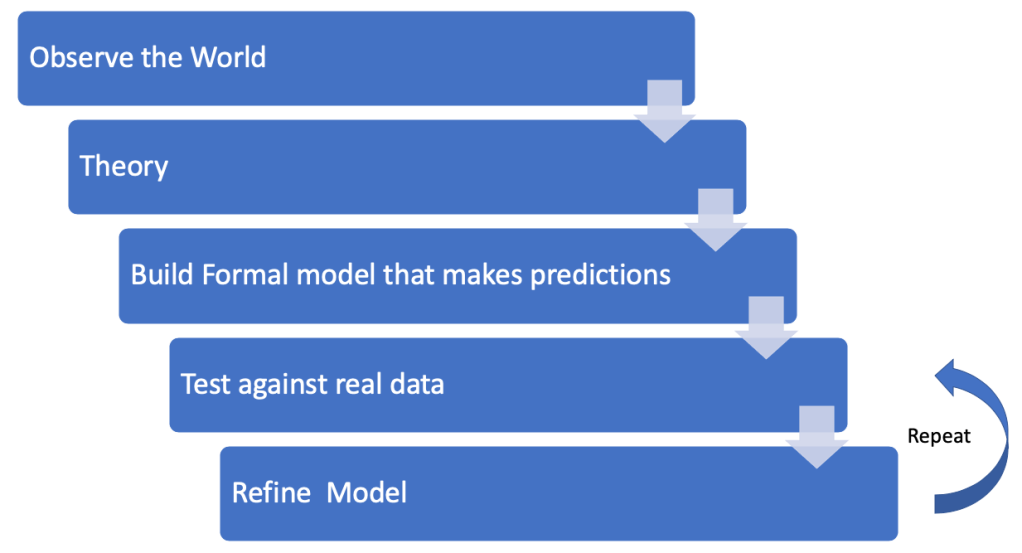

Lots of people are talking about models as though they are data, or verified information. When you hear someone mention a model, replace that term with “formalized theory”, because that’s all they are. The research process looks like this:

First, you look around the world and observe. You see that when you drop things, they fall down. Your theory is that everything will fall when dropped. That’s great. But it isn’t very specific.

It’s time to build a formal model. This will require you to think with great specificity and make very clear what your model is. So if you’re Galileo (please forgive some violence to the history of science here), you formulate:

Acceleration due to gravity:

$$a = -9.8\ \text{m/s}^2$$

(things accelerate downward at 9.8 m/s every second)

Now this is an extremely specific set of predictions we can test. We can drop some objects from high places and see whether they’re really traveling at the predicted velocity at the bottom. It even makes some surprising predictions, such as that heavy and light objects will fall at the same rate, because the equation doesn’t have mass as a parameter. The model passes!
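To make “extremely specific” concrete, here is a minimal Python sketch of the predictions this model makes for an object dropped from rest. It assumes no air resistance and uses 9.8 m/s² as a positive magnitude (the minus sign above just means “downward”); the function names are illustrative, not from any library.

```python
import math

# Galileo-style model: constant downward acceleration near the Earth's surface.
# Assumes no air resistance; 9.8 is the magnitude of the acceleration in m/s^2.
G_SURFACE = 9.8


def fall_time(height_m: float, g: float = G_SURFACE) -> float:
    """Predicted time (s) to fall height_m metres from rest, from h = g * t^2 / 2."""
    return math.sqrt(2 * height_m / g)


def impact_speed(height_m: float, g: float = G_SURFACE) -> float:
    """Predicted speed (m/s) at the bottom of the drop, from v = g * t."""
    return g * fall_time(height_m, g)


# Mass never appears as an argument, so the model predicts that a heavy and a
# light object dropped from the same height land at the same time and speed.
if __name__ == "__main__":
    for h in (5.0, 20.0, 45.0):
        print(f"{h:5.1f} m: t = {fall_time(h):.2f} s, v = {impact_speed(h):.1f} m/s")
```

Testing the model means timing real drops and comparing the measurements with these numbers; agreement within measurement error is what “the model passes” amounts to.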

Note that the graphic above doesn’t have an exit – it’s stuck in an endless loop at the end between testing and refining. Newton, for example, figured out that this model is just a special case that works near the Earth’s surface for objects negligibly small compared to the Earth, and replaced it with a more universal law of gravitation:

$$F = \frac{G M_1 M_2}{r^2}$$

(force = G × mass1 × mass2 / (distance between the objects)²)

Now the same model that describes falling objects also describes the orbits of the planets around the Sun.
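As a rough check that Galileo’s number really is a special case of Newton’s law, this sketch plugs standard rounded textbook values for G, the Earth’s mass, and its mean radius into the formula and divides the force by the falling object’s own mass. The constants are approximations and the helper names are hypothetical.

```python
# Newton's law of universal gravitation, F = G * M1 * M2 / r^2.
# Constants are standard textbook values, rounded.
G = 6.674e-11            # gravitational constant, N*m^2/kg^2
EARTH_MASS = 5.972e24    # kg
EARTH_RADIUS = 6.371e6   # mean radius, m


def gravitational_force(m1: float, m2: float, r: float) -> float:
    """Attractive force (N) between two masses (kg) whose centres are r metres apart."""
    return G * m1 * m2 / r**2


def surface_acceleration(mass: float, radius: float) -> float:
    """Acceleration of a small object at distance `radius` from a body of `mass`.

    a = F / m_small = G * mass / radius^2: the small object's own mass cancels,
    which is why the constant-acceleration model needs no mass parameter.
    """
    return G * mass / radius**2


if __name__ == "__main__":
    g0 = surface_acceleration(EARTH_MASS, EARTH_RADIUS)
    g1 = surface_acceleration(EARTH_MASS, EARTH_RADIUS + 1000)  # 1 km higher
    print(f"g at the surface ~ {g0:.2f} m/s^2")
    print(f"g 1 km up        ~ {g1:.4f} m/s^2")
```

The surface value comes out very close to 9.8 m/s², and moving 1 km higher changes it only slightly, which is why treating it as a constant works so well for everyday drops.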

Later, Einstein had to refine it to account for very deep gravity wells, very high speeds, time, light, and other niceties.

The key point here is that the “law” of gravitation is just a mathematical model, a theory. It predicts what you’ll get if you measure, but it’s not data, and it’s not truth.

But not all models are created equal. Ones that have been formulated, tested, refined, and tested again and again become extraordinarily useful, while new, relatively unproven ones must be taken with a grain of salt.

The Newtonian model of gravitation is a truly remarkable model. It’s incredibly simple compared to how much it explains. Once formulated, this model of how balls roll down ramps was used to explain the motion of the planets in the sky, to predict the paths of artillery shells, and to plot a course from the moving Earth to the moving Moon across hundreds of thousands of miles. With just three parameters!

Epidemiology is vastly more complex, and not deterministic. You’re trying to predict what will happen when trillions of virus particles interact with millions of people in different situations (indoor/outdoor, warm/cold, masks/no masks, distancing/not distancing). So the models here have to be statistical, or stochastic. This means we truly can’t predict any one case, but we hope to predict what will happen when we average over enough cases. The IHME model can’t tell you whether YOU will get the virus, but it tries to predict how the virus will spread, and how many cases and deaths a population will experience given its age, weather, behavior, susceptibility, etc. There are lots of parameters.
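To illustrate what “stochastic” means, the sketch below uses the Reed-Frost chain-binomial model, a classic textbook epidemic model that is far simpler than IHME and is swapped in here purely for illustration. The town size, the per-contact infection probability `p`, and the random seed are all hypothetical. Individual runs differ wildly (some outbreaks fizzle after a case or two, others sweep through most of the town); the average over many runs is what such a model is really predicting.

```python
import random
import statistics


def reed_frost_final_size(population: int, initial_infected: int, p: float,
                          rng: random.Random) -> int:
    """One stochastic run of the Reed-Frost epidemic model.

    Each generation, every susceptible person independently escapes infection
    from each currently infectious person with probability (1 - p). Infectious
    people recover, and stay immune, after one generation.
    Returns the total number of people ever infected.
    """
    susceptible = population - initial_infected
    infectious = initial_infected
    total_infected = initial_infected
    while infectious > 0 and susceptible > 0:
        # Chance that a given susceptible person is infected this generation.
        p_infect = 1 - (1 - p) ** infectious
        new_cases = sum(1 for _ in range(susceptible) if rng.random() < p_infect)
        susceptible -= new_cases
        total_infected += new_cases
        infectious = new_cases
    return total_infected


if __name__ == "__main__":
    rng = random.Random(2020)  # fixed seed so the runs are reproducible
    # Hypothetical town of 500 with one initial case; p * population ~ 2, so each
    # early case infects about two others on average. Not fitted to COVID data.
    runs = [reed_frost_final_size(500, 1, 0.004, rng) for _ in range(200)]
    print("first ten runs:", runs[:10])
    print("mean final size:", statistics.mean(runs))
```

No single run tells you what will happen to any particular town, but the mean over many runs is stable, which is the sense in which a stochastic model “predicts” an outbreak.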

Now let’s look at two cases that try to use epidemiological models like the IHME model to “prove” things.

Did Trump double US deaths by delaying a week?

For example: https://slate.com/news-and-politics/2020/05/new-model-shutting-down-one-week-earlier-cut-deaths-fatalities.html

A “model” by scientists “finds” that shutting down earlier could have cut deaths by half. What this really means is someone ran a model like the IHME model with the assumption we’d shut down a week earlier, and got a prediction – that it would have saved half of the lives. And in the great telephone game of the press and politics, this has turned into “Trump killed twice as many people for each week he delayed responding”.
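Mechanically, “a model finds” usually means something like the sketch below: run the same model twice with one assumption changed. This is a toy SIR (susceptible-infected-recovered) model stepped day by day, not the model behind the Slate story, and every parameter (`beta_before`, `beta_after`, `gamma`, `ifr`, the population, the shutdown days) is a hypothetical placeholder.

```python
def sir_deaths(shutdown_day: int, days: int = 365, population: float = 1_000_000,
               initial_infected: float = 10.0, beta_before: float = 0.3,
               beta_after: float = 0.08, gamma: float = 0.1,
               ifr: float = 0.005) -> float:
    """Cumulative deaths predicted by a toy SIR model where transmission drops at shutdown.

    All default parameter values are hypothetical placeholders, not estimates
    for COVID-19 or for any real country. Uses simple daily (Euler) steps.
    """
    s = population - initial_infected  # susceptible
    i = initial_infected               # currently infected
    for day in range(days):
        # Transmission rate switches from beta_before to beta_after on shutdown_day.
        beta = beta_before if day < shutdown_day else beta_after
        new_infections = beta * s * i / population
        new_recoveries = gamma * i
        s -= new_infections
        i += new_infections - new_recoveries
    # Deaths modelled as a fixed fraction (ifr) of everyone ever infected.
    return ifr * (population - s)


if __name__ == "__main__":
    late = sir_deaths(shutdown_day=30)
    early = sir_deaths(shutdown_day=23)  # the same scenario, one week earlier
    print(f"shutdown on day 30: {late:,.0f} deaths")
    print(f"shutdown on day 23: {early:,.0f} deaths")
    print(f"ratio: {late / early:.1f}x")
```

The gap between the two runs comes almost entirely from the assumed growth rate before the shutdown; change `beta_before` and the “cost of one week” changes with it. The output is a prediction to be checked against data, not a measurement of what would have happened.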

Remember, when you read “model”, think “theory”. So the headline should probably read “Scientists’ formal theory predicts shutting down a week earlier would have saved half of the lives”. Is that theory right? Maybe. Maybe even probably, given that these models have been around for a while and have been tested against real-world cases. But they simply can’t prove what would have happened with the kind of precision people assume.

The Right Does It Too – UK Herd Immunity

Recently, a number of UK publications reported that “Levels of herd immunity in UK may already be high enough to prevent second wave, study suggests”. That’s exciting – did this study take blood samples, or expose a random sample of UK citizens to the virus?

Sadly, no. They just developed a theory that a sizable number of UK citizens might already have some immunity to the virus without ever having been infected. They assumed that if, say, 40% already had varying levels of immunity, then only around 20% of people would need to catch the virus to reach a combined 60% and “herd” immunity.

The study simply “showed” this by feeding this new assumption – that 40% are already immune – into a conventional model like the IHME model. And OF COURSE if you start out with 40% immune, you get to 60% faster. No modeling needed, really. In fact, I’d predict that if you started with 100% immunity in the population, the pandemic would stop immediately! No model needed, and I’m pretty sure it’s true.
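The arithmetic behind “you get to 60% faster” fits in a few lines. This sketch uses the classic homogeneous-mixing threshold 1 - 1/R0 and treats pre-existing immunity as full immunity, both simplifying assumptions; R0 = 2.5 is a hypothetical value chosen only because it yields the 60% threshold mentioned above, and the function names are illustrative.

```python
def herd_immunity_threshold(r0: float) -> float:
    """Classic threshold 1 - 1/R0: the immune fraction at which each case infects,
    on average, fewer than one new person (assumes homogeneous mixing)."""
    return max(0.0, 1.0 - 1.0 / r0)


def further_infections_needed(r0: float, already_immune: float) -> float:
    """Fraction of the population that must still be infected to reach the
    threshold, given an ASSUMED fraction that is already (fully) immune."""
    return max(0.0, herd_immunity_threshold(r0) - already_immune)


if __name__ == "__main__":
    r0 = 2.5  # hypothetical; gives a 60% threshold
    for immune in (0.0, 0.4, 1.0):
        needed = further_infections_needed(r0, immune)
        print(f"{immune:.0%} already immune -> {needed:.0%} more infections needed")
    # 0% already immune -> 60% more infections needed
    # 40% already immune -> 20% more infections needed
    # 100% already immune -> 0% more infections needed
```

Every output here follows directly from the input assumption about existing immunity; the calculation cannot tell you whether that assumption is true.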

The theory that many people are already immune is a fascinating one, and might explain some aberrant results from Sweden, where only 5% of the population has been exposed but cases are dropping. But there’s no data to support this idea – it’s back in the “formal theory that makes predictions” stage, waiting for an actual test to see if it’s true.

But again, in the telephone game of the press and especially social media, people are telling me that a “study” from the UK “supports” what southern states are doing by letting the virus rage through their populations unopposed. Far from being support, those states are in fact tests of the theory. If 40% of the people in Florida, Texas, and Arizona are already immune, their exponential spikes will soon top out, and cases and deaths will fall back to negligible numbers. If not, the theory will have been disproven. We shall see.

Model output isn’t data. Running your model and getting output doesn’t produce data, it produces predictions – which must then be tested with data.